Biography

I'm a research engineer at the Institute for Infocomm Research, A*STAR, where I work on domain generalization and continual learning. Before this, I spent a few years at Moovita Pte. Ltd., Singapore, where I taught machines how to multitask and predict what pedestrians might do next. I hold a Master's and a Bachelor's in Electrical Engineering from IIT Kanpur. My thesis on Semi-Supervised Super-Resolution was advised by Prof. Piyush Rai and Prof. Vipul Arora. I am broadly interested in Computer Vision, particularly in representation learning and learning with limited labeled data. In the past, I have had the chance to work under the supervision of Prof. Vineet Gandhi, Prof. Vinay Namboodiri, and Prof. Harish Karnick.

You can check out my publications below. If they interest you and you'd like to explore a research collaboration, feel free to contact me by email or LinkedIn.

Download my resumé.

- Representation Learning

- Domain Generalization

- Learning with limited labeled data

- M.Tech. in Electrical Engineering, 2021, Indian Institute of Technology Kanpur

- B.Tech. in Electrical Engineering, 2020, Indian Institute of Technology Kanpur

Publications

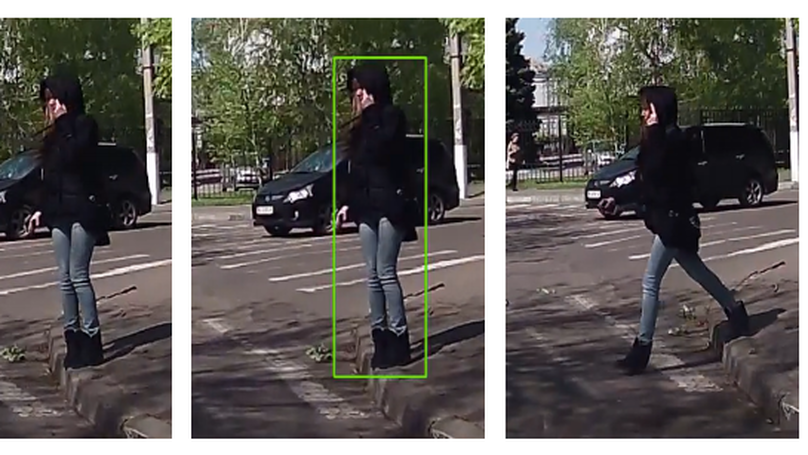

Pedestrians are the most vulnerable road users and are at a high risk of fatal accidents. Accurately detecting pedestrians and effectively analyzing their intention to cross the road are critical for autonomous vehicles and ADAS solutions to safely navigate public roads. Fast and precise estimation of pedestrian intention helps in adopting safe driving behavior. Visual pose and motion are two important cues that have previously been employed to determine pedestrian intention. However, motion patterns can give erroneous results on short video sequences. In this work, we propose an intention prediction network that uses pedestrian bounding boxes, pose, and bounding box coordinates, and takes advantage of global context along with the local setting. This network implicitly learns pedestrians' motion cues and location information to differentiate between a crossing and a non-crossing pedestrian. We experiment with different combinations of input features and propose multiple models that are efficient in terms of accuracy and inference speed. Our best-performing model shows around 85% accuracy on the JAAD dataset.

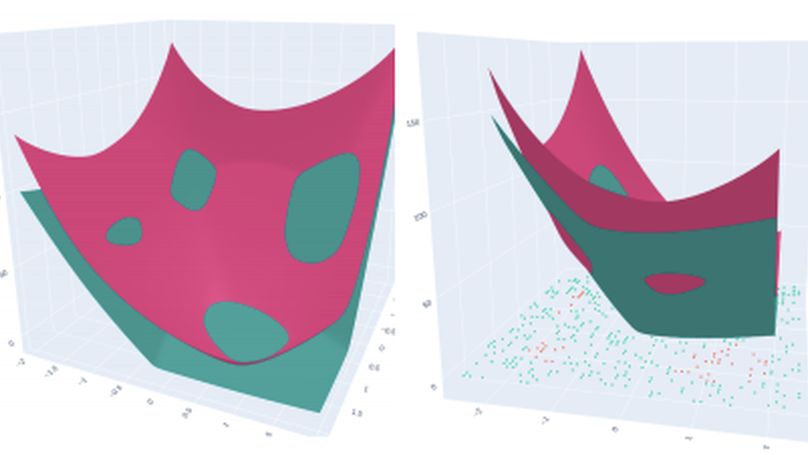

In this paper, we investigate a constrained formulation of neural networks where the output is a convex function of the input. We show that the convexity constraints can be enforced on both fully connected and convolutional layers, making them applicable to most architectures. The convexity constraints include restricting the weights (for all but the first layer) to be non-negative and using a non-decreasing convex activation function. Albeit simple, these constraints have profound implications for the generalization abilities of the network. We draw three valuable insights: (a) Input Output Convex Neural Networks (IOC-NNs) self-regularize and reduce the problem of overfitting; (b) although heavily constrained, they outperform the base multi-layer perceptrons and achieve performance similar to that of base convolutional architectures; and (c) IOC-NNs show robustness to noise in train labels. We demonstrate the efficacy of the proposed idea using thorough experiments and ablation studies on standard image classification datasets with three different neural network architectures.
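The two constraints named in the abstract above (non-negative weights in every layer but the first, and a convex non-decreasing activation) can be illustrated with a minimal pure-Python sketch. This is not the paper's implementation: the helper names (`make_layer`, `project_non_negative`, `midpoint_gap`), the layer sizes, the choice of ReLU, and the clamp-to-zero projection are all assumptions made for illustration.

```python
import random


def relu(x):
    # ReLU is convex and non-decreasing, so it satisfies the activation constraint.
    return x if x > 0.0 else 0.0


def make_layer(n_in, n_out, rng):
    # Hypothetical helper: a random dense layer stored as (weights, biases).
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]
    biases = [rng.uniform(-1.0, 1.0) for _ in range(n_out)]
    return weights, biases


def project_non_negative(layers):
    # Clamp weights to >= 0 in every layer except the first (assumed projection);
    # biases are left unconstrained.
    projected = []
    for i, (w, b) in enumerate(layers):
        if i > 0:
            w = [[max(0.0, v) for v in row] for row in w]
        projected.append((w, b))
    return projected


def forward(layers, x):
    # Dense forward pass: ReLU on hidden layers, final layer left linear.
    h = list(x)
    for i, (w, b) in enumerate(layers):
        h = [sum(wi * hi for wi, hi in zip(row, h)) + bi for row, bi in zip(w, b)]
        if i < len(layers) - 1:
            h = [relu(v) for v in h]
    return h


def midpoint_gap(layers, a, b):
    # For a convex scalar output f, f((a + b) / 2) - (f(a) + f(b)) / 2 <= 0.
    mid = [(x + y) / 2.0 for x, y in zip(a, b)]
    return forward(layers, mid)[0] - 0.5 * (forward(layers, a)[0] + forward(layers, b)[0])


rng = random.Random(0)
layers = project_non_negative(
    [make_layer(3, 8, rng), make_layer(8, 8, rng), make_layer(8, 1, rng)]
)
a = [rng.uniform(-5.0, 5.0) for _ in range(3)]
b = [rng.uniform(-5.0, 5.0) for _ in range(3)]
print("midpoint gap (should not be positive):", midpoint_gap(layers, a, b))
```

The midpoint check is only a numerical sanity test on sampled pairs, not a proof. Convexity itself follows from composition: an affine first layer followed by ReLU is convex, and non-negative combinations of convex functions passed through a convex non-decreasing activation remain convex.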

Responding safely to pedestrians on the road is one of the critical challenges for autonomous vehicles. For smooth navigation of autonomous vehicles in urban environments, it is crucial to predict pedestrians' road-crossing intention accurately and respond safely. Though motion analysis is a key feature for estimating future trajectories, it may be inconsistent for the small, variable actions of humans. For reliable early prediction of the future trajectory of a pedestrian, visual pose and surrounding information are helpful. In this work, we propose a novel approach to determine the intention of a pedestrian by using pose, surrounding context, and bounding box information over a small duration of half a second (last 16 frames). We study the significance of different combinations of these features. We adopt 3D convolutional networks, which have shown remarkable performance in activity recognition tasks. In our experiments using the popular pedestrian intention dataset, JAAD, the proposed method achieved over 84% accuracy in estimating the intention of a pedestrian to cross.
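The "last 16 frames" observation window described above can be pictured as a fixed-length buffer over the incoming video stream. The sketch below is illustrative only: `FrameWindow` is a hypothetical name, frames are stood in for by integer ids, and nothing here reflects the paper's actual data pipeline or model.

```python
from collections import deque


class FrameWindow:
    """Hypothetical helper that keeps only the most recent `size` frames."""

    def __init__(self, size=16):
        self.size = size
        # A deque with maxlen silently drops the oldest item when full.
        self._frames = deque(maxlen=size)

    def push(self, frame):
        # Add the newest frame to the window.
        self._frames.append(frame)

    def ready(self):
        # True once a full window is available for prediction.
        return len(self._frames) == self.size

    def clip(self):
        # Frames in temporal order, oldest first.
        return list(self._frames)


window = FrameWindow(size=16)
for frame_id in range(20):  # integer ids stand in for decoded frames
    window.push(frame_id)

if window.ready():
    print("clip covers frames", window.clip()[0], "to", window.clip()[-1])
```

In a real system each entry would hold the per-frame features (pose, context crop, bounding box) rather than an id, and the full clip would be handed to the network once `ready()` returns true.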

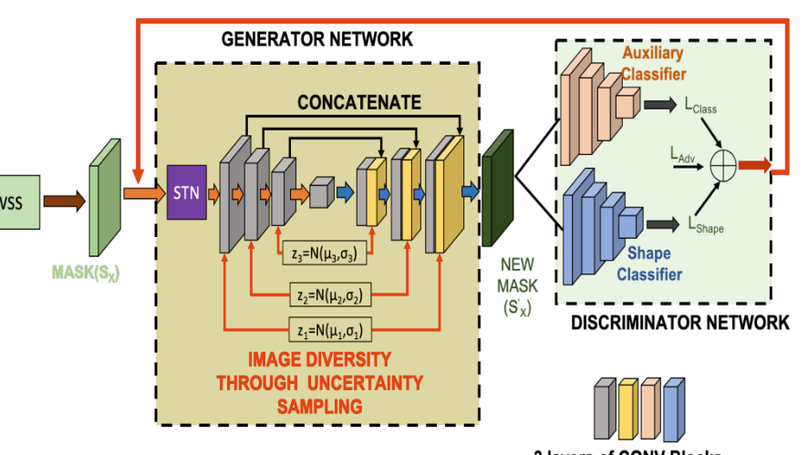

While medical image segmentation is an important task for computer-aided diagnosis, the high expertise required for pixelwise manual annotation makes it a challenging and time-consuming task. Since conventional data augmentations do not fully represent the underlying distribution of the training set, the trained models have varying performance when tested on images captured from different sources. Most prior work on image synthesis for data augmentation ignores the interleaved geometric relationship between different anatomical labels. We propose improvements over previous GAN-based medical image synthesis methods by learning the relationship between different anatomical labels. We use a weakly supervised segmentation method to obtain a pixel-level semantic label map of each image, which is used to learn the intrinsic relationship of geometry and shape across semantic labels. Latent space variable sampling results in diverse generated images from a base image and improves robustness. We use the synthetic images from our method to train networks for segmenting COVID-19 infected areas from lung CT images. The proposed method outperforms state-of-the-art segmentation methods on a public dataset. Ablation studies also demonstrate the benefits of integrating geometry and diversity.

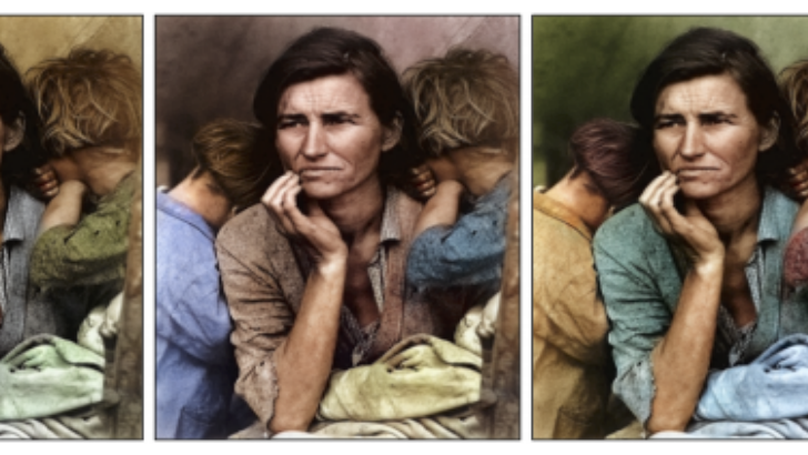

In this paper, we tackle the problem of colorizing grayscale videos to reduce bandwidth usage. For this task, we use a few colored keyframes from the colored version of the grayscale video as reference images. We propose a model that extracts keyframes from a colored video and trains a Convolutional Neural Network from scratch on these colored frames. The extracted keyframes capture the colors used in the video, which helps us colorize the grayscale version efficiently. One application of the proposed technique is saving bandwidth when sending raw colored videos that have not gone through any compression. A raw colored video takes up around three times as much memory as its grayscale version. We can exploit this fact and send a grayscale video along with our trained model instead of a colored video. Later in this paper, we show how this technique can reduce bandwidth usage by up to a factor of three when transmitting raw colored videos.
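The "up to three times" figure above can be checked with back-of-the-envelope arithmetic. The sketch below assumes uncompressed 8-bit frames with three channels for color and one for grayscale; the resolution, frame count, and model size are hypothetical values chosen only for illustration, not numbers from the paper.

```python
def raw_video_bytes(width, height, frames, channels, bytes_per_sample=1):
    # Size of an uncompressed video: one sample per pixel per channel per frame.
    return width * height * frames * channels * bytes_per_sample


def bandwidth_saving_factor(width, height, frames, model_bytes):
    # Ratio of sending raw color video to sending grayscale video plus the model.
    color = raw_video_bytes(width, height, frames, channels=3)
    gray = raw_video_bytes(width, height, frames, channels=1)
    return color / (gray + model_bytes)


# Hypothetical example: 640x480, 300 frames, and a 1 MB trained model.
factor = bandwidth_saving_factor(640, 480, 300, model_bytes=1_000_000)
print(f"saving factor with model overhead: {factor:.3f}")

# With a negligible model size the ratio reaches its upper bound of 3.
print("upper bound:", bandwidth_saving_factor(640, 480, 300, model_bytes=0))
```

The factor stays below three whenever the transmitted model has non-zero size, and it approaches three as the video grows longer relative to the model.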